Right now, somewhere on the internet, someone is having a live conversation with an AI.

Not typing into a chatbox. Not listening to a robotic voice. A real conversation, face to face and in real time, with something that looks like a person, sounds like a person, and responds like a person.

And for a moment, they probably forgot it wasn't human.

I've spent the last year building applications with this technology. I've watched it go from a party trick to something that's genuinely reshaping how companies communicate, how people learn, how businesses scale. And almost nobody is talking about it in plain English.

So that's what this post is.

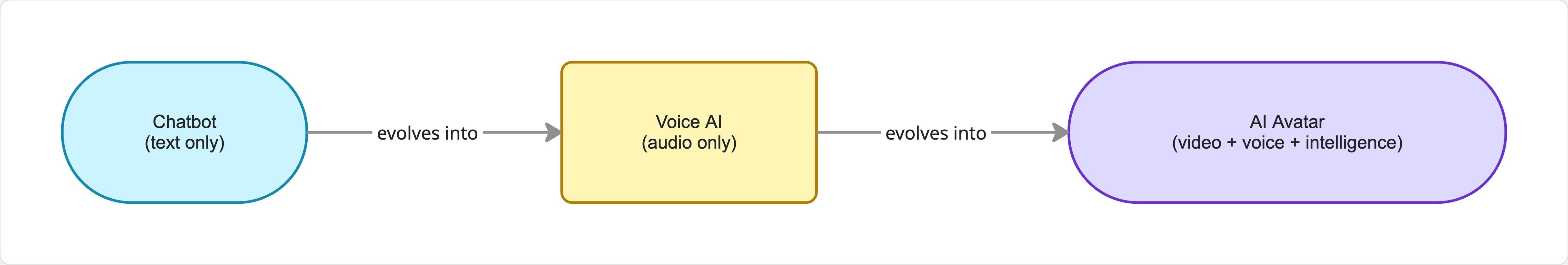

The Spectrum Nobody Explains Clearly

When most people hear "AI avatar," they picture a cartoon character or a video game skin. That's not what I'm talking about.

The AI avatars that matter right now are interactive digital humans — and they exist on a spectrum.

On one end: a photo that's been animated to speak a script. Cheap, scalable, slightly uncanny. You've probably seen these in corporate training videos.

On the other end: real-time interactive avatars. These aren't playing back a pre-recorded clip. They're responding to you, live. You ask a question, the avatar thinks — using the same AI models that power ChatGPT — and answers in under a second. With a face that tracks your attention. With a voice that carries emotion. With natural eye contact and subtle head movement.

The gap between those two ends is enormous. And in the past twelve months, the industry has sprinted toward the second one.

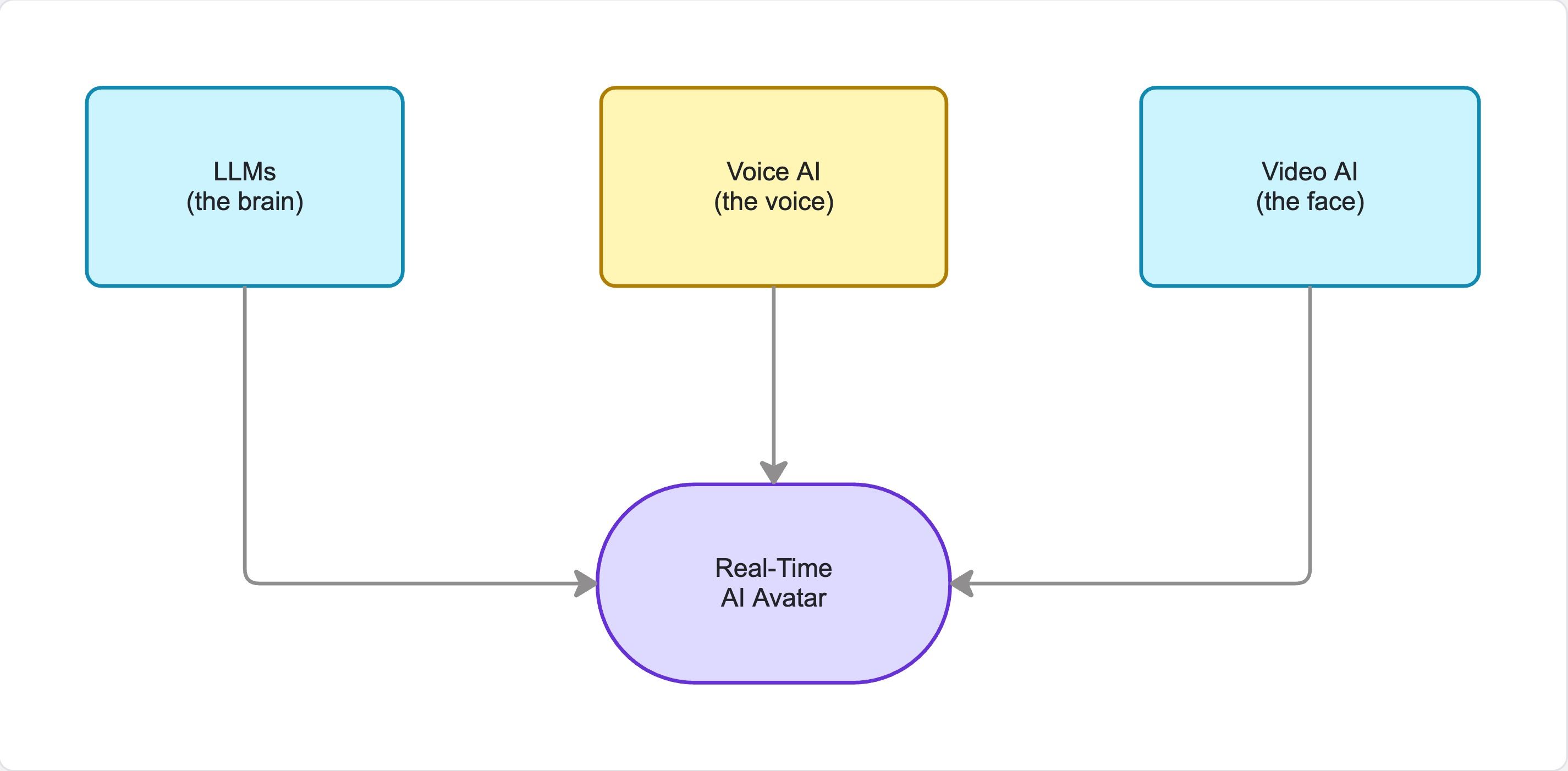

Why This Is Possible Now: Three Technologies Colliding

This isn't magic. It's three completely separate technologies — developed independently — finally reaching the point where you can combine them in real time.

1. Large Language Models (the brain): ChatGPT, Claude, Gemini. These can now hold a conversation, answer questions, follow a personality, and respond intelligently in under a second.

2. Voice AI (the voice): ElevenLabs and others have gotten text-to-speech so good it's basically indistinguishable from a human voice. And critically, it's fast: under 200 milliseconds from text to audio.

3. Video AI (the face): This was the hardest piece. Taking audio and turning it into a synchronized, expressive face, in real time, at low latency. This is the one that only cracked in the last year or two.

Combine those three and you have something that genuinely didn't exist three years ago.
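If you think in code, here's roughly how the three pieces chain together. This is a minimal sketch, not any vendor's actual API: `AvatarPipeline`, `fake_llm`, `fake_tts`, and `fake_video` are names I made up for illustration, and the stubs just stand in for a real language model, a real text-to-speech service, and a real face-animation model.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class AvatarPipeline:
    """Chains the three stages: brain -> voice -> face (all hypothetical stand-ins)."""
    think: Callable[[str], str]            # language model: user text -> reply text
    speak: Callable[[str], bytes]          # voice AI: reply text -> audio bytes
    animate: Callable[[bytes], List[str]]  # video AI: audio bytes -> video frames

    def respond(self, user_text: str) -> Tuple[str, bytes, List[str]]:
        reply = self.think(user_text)   # 1. decide what to say
        audio = self.speak(reply)       # 2. turn the reply into speech
        frames = self.animate(audio)    # 3. drive the face from the audio
        return reply, audio, frames


# Stub stages so the sketch runs without any external service.
def fake_llm(text: str) -> str:
    return f"You said: {text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


def fake_video(audio: bytes) -> List[str]:
    # Pretend every 10 bytes of audio needs one video frame.
    return [f"frame-{i}" for i in range(len(audio) // 10 + 1)]


pipeline = AvatarPipeline(think=fake_llm, speak=fake_tts, animate=fake_video)
reply, audio, frames = pipeline.respond("hello")
print(reply, len(audio), len(frames))
```

The point of the shape: each stage only cares about the output of the one before it, which is why three independently developed technologies can be snapped together at all.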

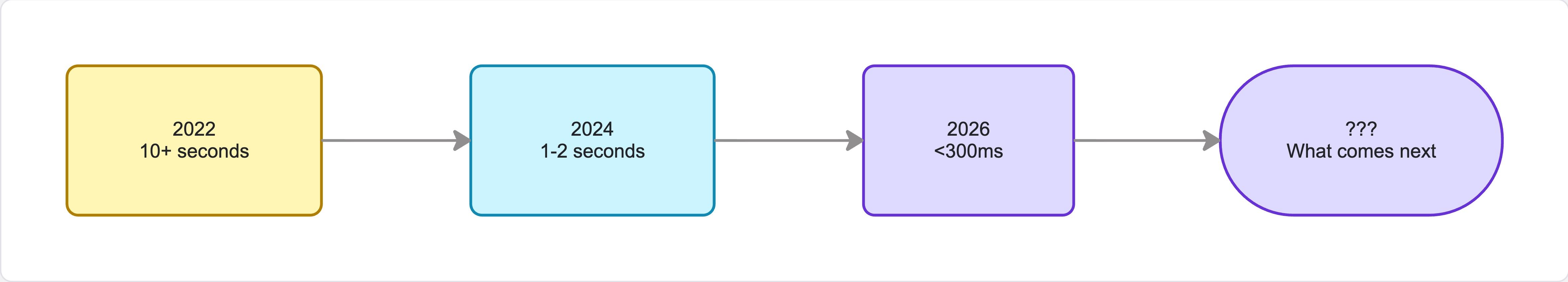

The Latency Problem (And Why It's Solved)

Here's why this is a 2026 conversation and not a 2020 conversation: latency.

You know how you know something is fake on a video call? It's usually not the picture quality — it's the lag. A half-second delay breaks the illusion immediately.

For interactive AI avatars to feel real, the full loop (you speak, the AI understands, generates a response, converts it to voice, and animates a face) has to happen in under 300 milliseconds. That's roughly the threshold where a pause stops feeling like natural turn-taking and starts feeling like lag.

2022: 10+ seconds. Unusable.

2024: 1–2 seconds. Awkward, but moving in the right direction.

2026: 100–300ms. Right at the threshold.

When you're at the threshold, use cases unlock almost overnight.
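To make the budget concrete, here's a small sketch of the arithmetic. The 300 ms target is the one from this post; the per-stage numbers are made up purely for illustration, not measurements from any real platform, and the stage names are my own labels. It also assumes each stage is timed to its first usable output, since in practice the stages stream and overlap rather than waiting for each other to finish.

```python
BUDGET_MS = 300  # end-to-end target discussed in this post


def total_latency_ms(stages: dict) -> int:
    """Add up the per-stage latencies, in milliseconds."""
    return sum(stages.values())


def fits_budget(stages: dict, budget_ms: int = BUDGET_MS) -> bool:
    """True if the whole loop lands at or under the budget."""
    return total_latency_ms(stages) <= budget_ms


# Illustrative numbers only (hypothetical, not benchmarks).
example_stages = {
    "speech_to_text": 60,
    "llm_first_token": 100,
    "text_to_speech": 80,
    "face_animation": 50,
}

print(total_latency_ms(example_stages))  # 290
print(fits_budget(example_stages))       # True

# A loop in the 1-2 second range misses the same budget.
print(fits_budget({"whole_loop": 1500}))  # False
```

Notice how little slack there is: in this made-up example, one stage running 20 ms slow puts the whole loop over the line.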

To show how fast this space is moving: just days ago, Runway — one of the most respected AI labs in the world — launched Characters, a real-time video agent API. Give it any image, it becomes an interactive avatar. No training. Instant. When a company with that research depth enters a space that was mostly small startups a year ago, that's a signal the technology has matured.

Where It's Already Deployed

This isn't hypothetical. It's live right now:

Customer service — Video avatars replacing text chatbots. Same information, completely different experience.

Training & onboarding — An AI version of your company's best trainer, available 24/7 in any language.

Sales outreach — Personalized video messages, customized by AI for every recipient at scale.

Healthcare — AI avatar intake assistants handling the administrative, repetitive parts of patient intake.

Digital preservation — Companies helping families create conversational digital twins of people they've lost. Complex territory ethically — but it shows how deep this goes.

What Comes Next

Every major shift in how we use the internet follows the same arc: capability emerges → first adopters build niche apps → quality and cost hit a threshold → mainstream adoption → it's everywhere.

Static websites. Then search. Then social. Then voice assistants.

The next layer is websites with faces.

Not every website. Not immediately. But the same way a chat widget became standard for customer-facing businesses — interactive video agents will become a standard layer of the web.

The most important part: the APIs that power this are now cheap enough and fast enough that you don't need to be a major corporation to build with it. Individuals, small teams, early-stage startups — you can build with this right now.

Want to Go Deeper?

I covered all of this in my latest video with a full visual walkthrough, including the actual platforms, latency numbers, and where I see Akapulu fitting into this picture.

I'm building in this space actively and sharing everything I learn in real time — the tools worth your attention, the ones that aren't, the use cases that work, and the ones that fall apart in production.

If that's useful to you, subscribe and drop your questions in the comments. I read every one.

-James